BrandSafety

TrustAndSafety

Multi-modal AI

DigitalAdvertising

VideoUnderstanding

Why Brand Advertising Can No Longer Ignore AI Safety

March 12, 2026

19.3% of Ad Budgets Are Flowing Into Unsafe Placements

Digital advertising grew on the promise of reach and efficiency. The goal was simple: deliver messages to more users faster and more precisely. Digital transformation made this possible, turning brand advertising into a data-driven industry.

But digital ads do not exist in a vacuum. They are always placed alongside specific content and consumed within that context. The same ad can leave a completely different impression depending on the video, article, or moment it appears in.

In this environment, "Brand Safety" has become a critical challenge. When ads appear next to inappropriate or extreme content, they can unintentionally damage a brand’s hard-earned trust and reputation. Platforms have responded by tightening policies and upgrading monitoring systems. This shift was necessary as the video ecosystem scaled.

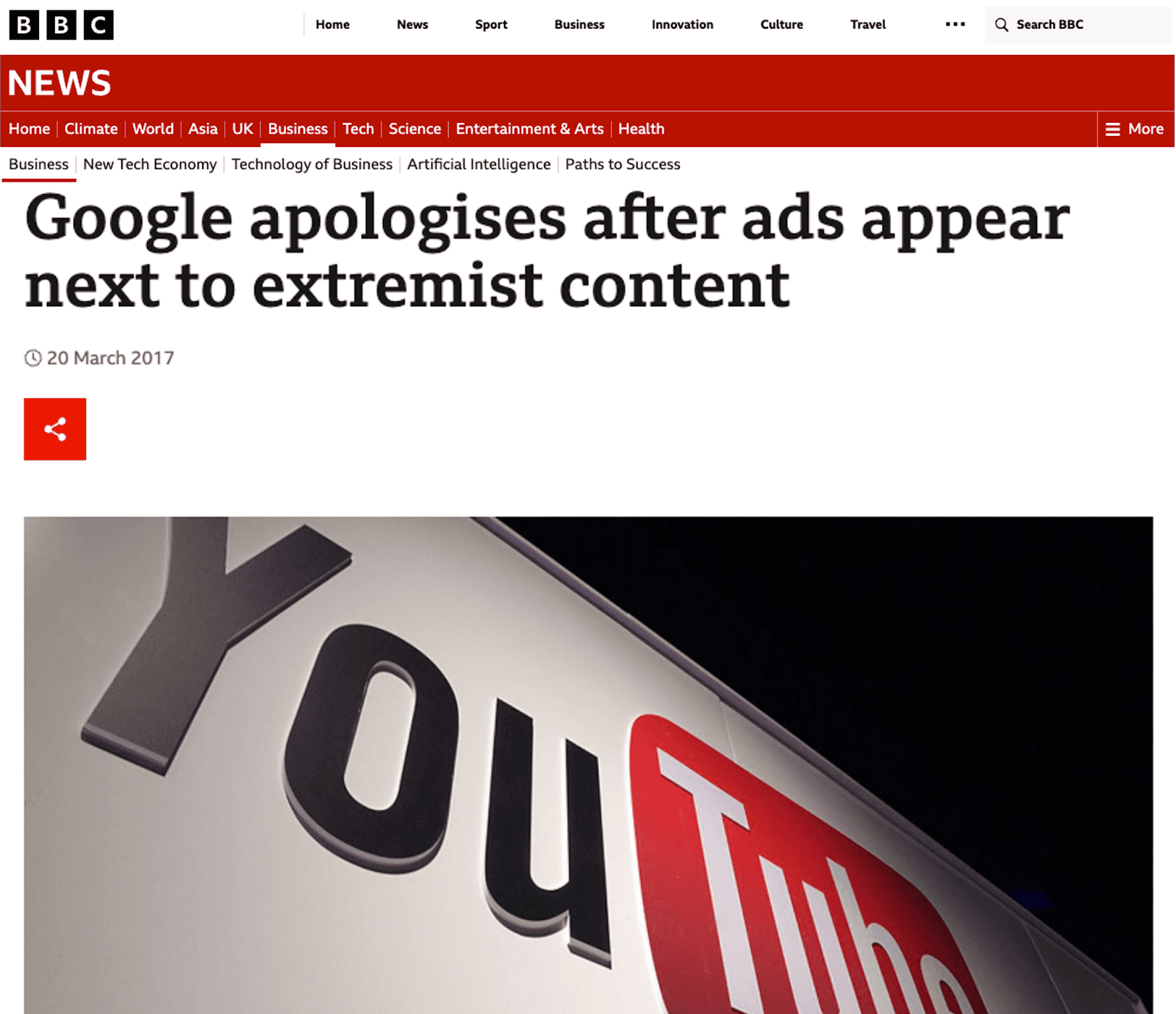

In 2017, ads from major global consumer brands appeared alongside extremist videos on YouTube. Following this, Google introduced policies like "Limited Monetization" (the Yellow Icon) to better manage the ecosystem. (Source: BBC)

While not perfect, video platforms built their own management systems. However, these systems were designed to manage "human-made content" (UGC). Today, that fundamental premise is shifting.

The Rise of AI: The Balance Between Creation and Management Collapses

In the early days of Generative AI, companies focused on speed and productivity. The main conversation was about how fast AI could generate content and how efficiently it could raise the baseline of production.

However, the 2026 International AI Safety Report marks a major turning point. Co-authored by experts like Professor Yoshua Bengio and Stephen Clare, this report points to "systemic risks." It warns that as general-purpose AI spreads across society and the economy, the risks go far beyond simple misuse.

At the heart of this issue is the "Evaluation Gap." AI models are tested before release, but it is difficult to predict their logic and behavior in real-world environments. Dangerous capabilities often emerge only after large-scale deployment. In a fast-moving digital ecosystem, detection tools and warning labels often lack consistency and effectiveness.

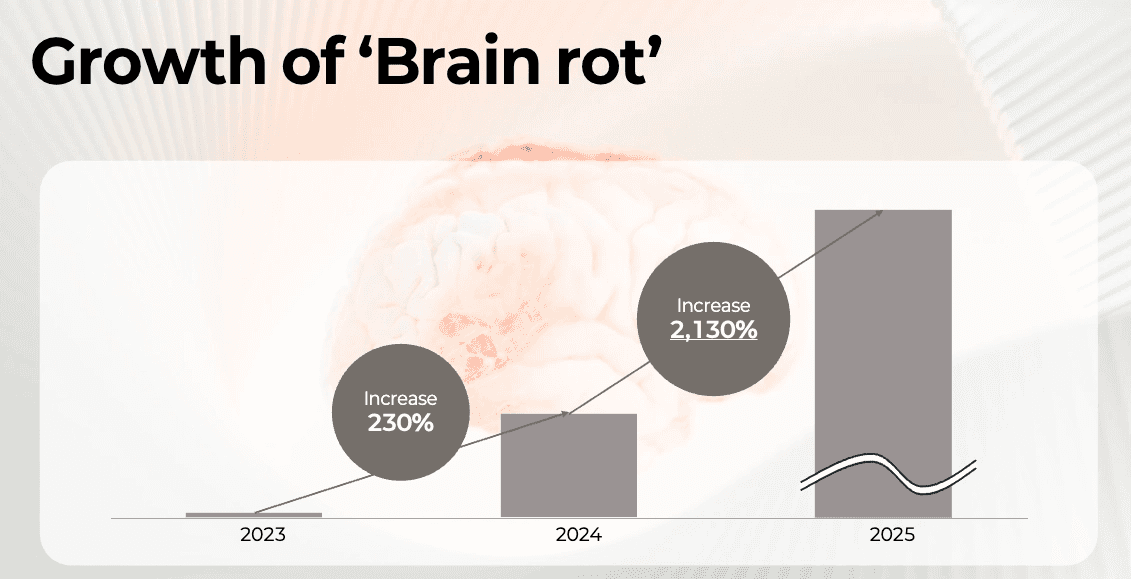

This structural imbalance has led to a phenomenon called "AI Slop": low-quality, mass-produced content that spreads faster than management and oversight can keep up. Over the past two years, mentions of "brain rot" have surged more than 60x, reflecting growing public anxiety about low-quality AI content.

At Pyler, we see this as more than just an increase in sensitive content. It is the result of a structural asymmetry between the speed of generative technology and the speed of verification. We believe this is an area where safety standards must be established. This is no longer an abstract debate about governance; it is a clear economic risk for brands.

Mentions of the term "Brain Rot" have increased over 60 times in the last two years. This surge shows rising public concern over the rapid spread of low-quality, mass-produced AI content.

Cleaning Up the Video Ecosystem

Major UGC video platforms like YouTube, TikTok, and Instagram are now taking collective action. "Ecosystem purification" has become a top priority. Recently, 16 of the world’s top 100 "AI Slop" channels were permanently deleted. These channels had reached a staggering 4.7 billion total views.

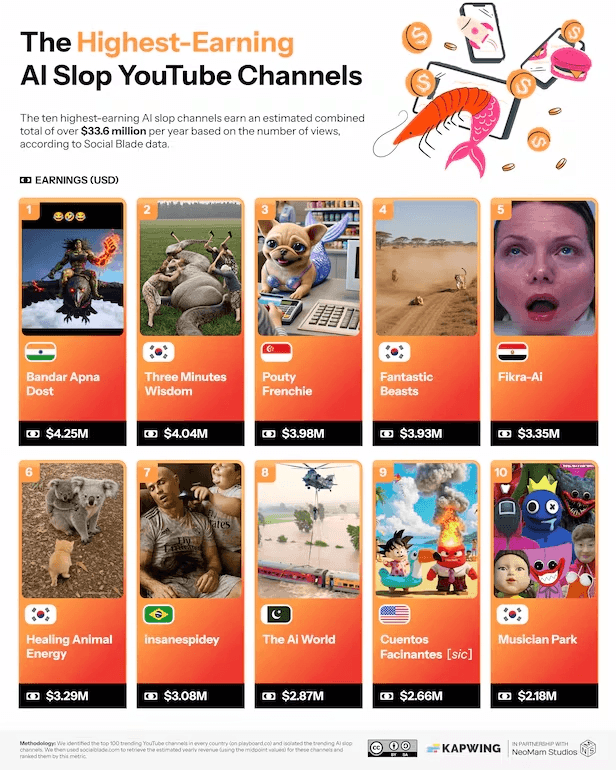

Video editing platform Kapwing analyzed the top 10 "AI Slop" YouTube channels by revenue. These channels generate millions of dollars in ad revenue, demonstrating the massive scale of mechanically produced content today.

This movement is not about suppressing creativity. In his 2026 annual letter, YouTube CEO Neal Mohan described this as an "inflection point" for the industry. He emphasized the need to distinguish genuine creative work from mechanical mass production designed solely for ad revenue.

However, simply detecting "AI-made video" is not enough. The core issue is not whether the creator is human or AI. What matters is the content itself and the viewer context around it.

Platforms can’t keep up. They review millions of uploads every day in real time. AI doesn’t just repeat old abusive patterns. It scales new formats and narratives fast.

The risks brands face are backed by data. Last year, Pyler used its Video Understanding AI to analyze over 40 million ad placements. We found that, on average, 19.3% of video ad budgets were spent on ads appearing next to sensitive or inappropriate content. For some specific campaigns, this figure exceeded 30%.
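To make the metric concrete, here is a minimal sketch of how a spend-weighted share of unsafe placements could be computed. It is not Pyler's actual pipeline: the `Placement` fields, the `sensitive` flag (standing in for the output of a content classifier), and the sample figures are all assumptions, chosen only so the toy result matches the 19.3% average.

```python
from dataclasses import dataclass


@dataclass
class Placement:
    video_id: str
    spend: float     # ad spend attributed to this placement
    sensitive: bool  # hypothetical label from a content classifier


def unsafe_spend_share(placements):
    """Return the fraction of total spend that landed on sensitive content."""
    total = sum(p.spend for p in placements)
    if total == 0:
        return 0.0  # no spend means nothing was at risk
    unsafe = sum(p.spend for p in placements if p.sensitive)
    return unsafe / total


# Illustrative numbers only, not real campaign data.
sample = [
    Placement("a", 500.0, False),
    Placement("b", 193.0, True),
    Placement("c", 307.0, False),
]
share = unsafe_spend_share(sample)
print(f"{share:.1%}")  # 19.3%
```

Weighting by spend rather than by placement count matters here: one expensive placement next to sensitive content can outweigh many cheap safe ones.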

This isn’t just wasted spend. In the AI era, ad dollars can end up funding opaque or sensitive content. Brands need a structural solution.

The Fundamental Gap: Generation vs. Validation

The 2026 AI Safety Report identifies a core problem: AI’s generative capabilities are advancing rapidly, but safety systems are failing to keep pace. Dr. Roman Yampolskiy, a leading scholar in AI safety, describes this as the "gap between what AI can create and what we can control."

Today’s generative models produce nearly unlimited synthetic content. This content is distributed globally and instantaneously. In contrast, monitoring systems still rely on basic technology and reactive measures. They respond only after the risk has already entered the ecosystem.

The AI Safety Expert: These Are The Only 5 Jobs That Will Remain In 2030! - Dr. Roman Yampolskiy

Dr. Roman Yampolskiy’s argument on how accelerating AI capabilities are redefining the limits of control, and why this shift matters far beyond the world of technology.

This is not a failure of generative AI companies. The core mission of companies like OpenAI is to build powerful generative engines. Their focus is on capability, performance, and fulfilling diverse prompts.

The difference between generation and validation is not just about speed; it is about structural roles. Generation expands possibilities. Validation judges the impact of those possibilities on society and the market. These two areas have fundamentally different purposes.

Ultimately, generation is about "possibility," while validation is the "safety mechanism" that evaluates market impact. In an environment where billions of hours of content are uploaded, relying solely on the internal safety nets of model developers or platforms is no longer enough. The need for an independent "Validation Layer" has become clear.

The Cost of Missing Validation: How "Brain Rot" Content Hits Brand Equity

As low-quality AI content floods platforms, brands cannot afford to ignore the change. When content exceeds the capacity for evaluation, ads follow the flow. Constant exposure to these unstable environments directly damages a brand’s intangible assets, such as its image and heritage.

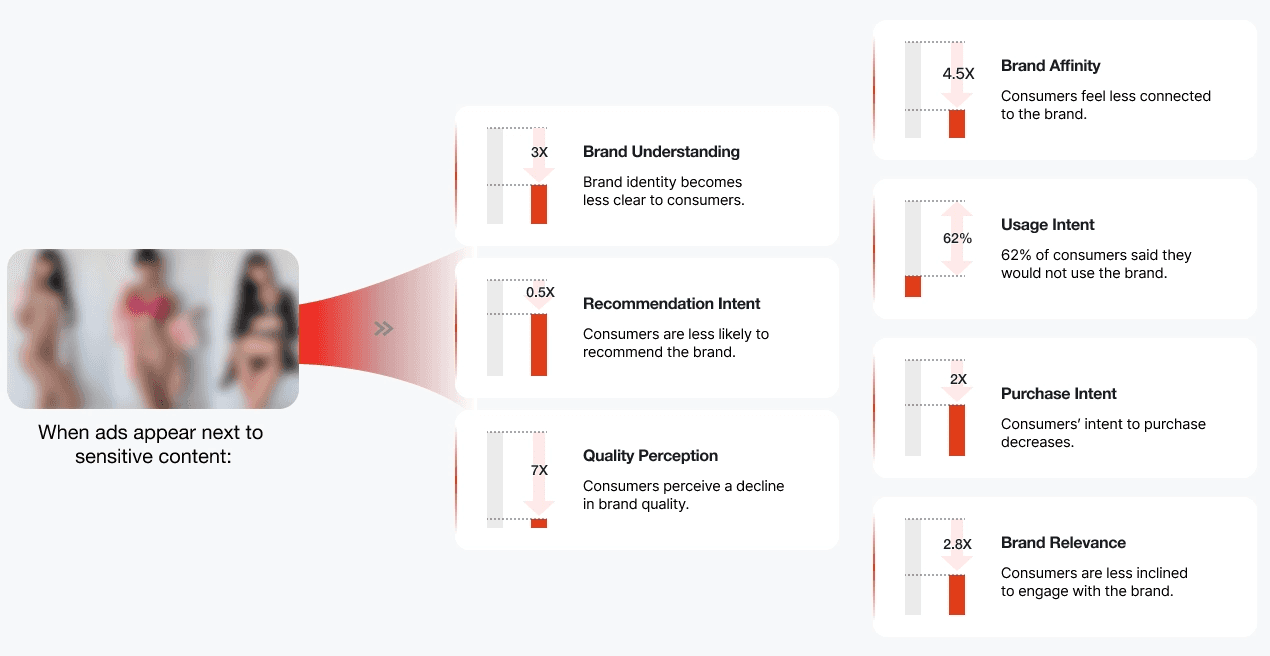

Research makes this risk clear. When ads appear next to sensitive content, perceived brand quality drops sharply, by roughly 7x. Brand affinity falls by 4.5x, and purchase intent is cut in half.

Impact metrics when ads are exposed to sensitive content (Source: Pyler Internal Research & IPG Media Lab)

Brands that judge success solely by views or ad rates face new risks. Advertising now lives in a complex environment where AI-generated noise and misinformation are intertwined.

A New Standard for Trust: Validation Infrastructure

The structural gap between "generation" and "validation" will not close anytime soon. As AI systems expand, content volume will continue to grow exponentially. The responsibility for brands to verify their own ad environments has never been greater.

Content generation has already outpaced the speed of human evaluation. An independent validation layer is no longer optional. It monitors ad environments in real time. It’s essential infrastructure for brand survival.
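As a rough illustration of what such a layer does, the sketch below screens candidate placements against a set of blocked categories before ads are served. Everything in it is hypothetical: the category names, the `classify` callback (a stand-in for a video understanding model), and the toy lookup table are assumptions for illustration, not a description of any real product or platform API.

```python
# Hypothetical risk categories; the article does not define a taxonomy.
BLOCKED_CATEGORIES = frozenset({"extremism", "ai_slop"})


def validate_placements(video_ids, classify, blocked=BLOCKED_CATEGORIES):
    """Split candidate placements into allowed and rejected lists.

    `classify` is any callable that maps a video ID to a set of labels.
    """
    allowed, rejected = [], []
    for video_id in video_ids:
        labels = classify(video_id)
        if labels & blocked:  # any overlap with a blocked category
            rejected.append(video_id)
        else:
            allowed.append(video_id)
    return allowed, rejected


def toy_classifier(video_id):
    """Stand-in for a real model: returns canned labels per video."""
    lookup = {"v1": set(), "v2": {"ai_slop"}, "v3": {"news"}}
    return lookup.get(video_id, set())


allowed, rejected = validate_placements(["v1", "v2", "v3"], toy_classifier)
print(allowed, rejected)  # ['v1', 'v3'] ['v2']
```

Keeping the classifier behind a plain callable reflects the independence argued for above: the validation step does not depend on any one platform's or model developer's internal safety checks.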

With over 80% of global data now in video format, simple text filters and metadata analysis are insufficient. We must understand the actual context and message of the video. Deeply analyzing the true intent behind the content is the only way to secure data-driven trust.

In the end, validation is not about finding fault. It is the strongest foundation for protecting brand assets and building a transparent, sustainable marketing environment in the AI era.

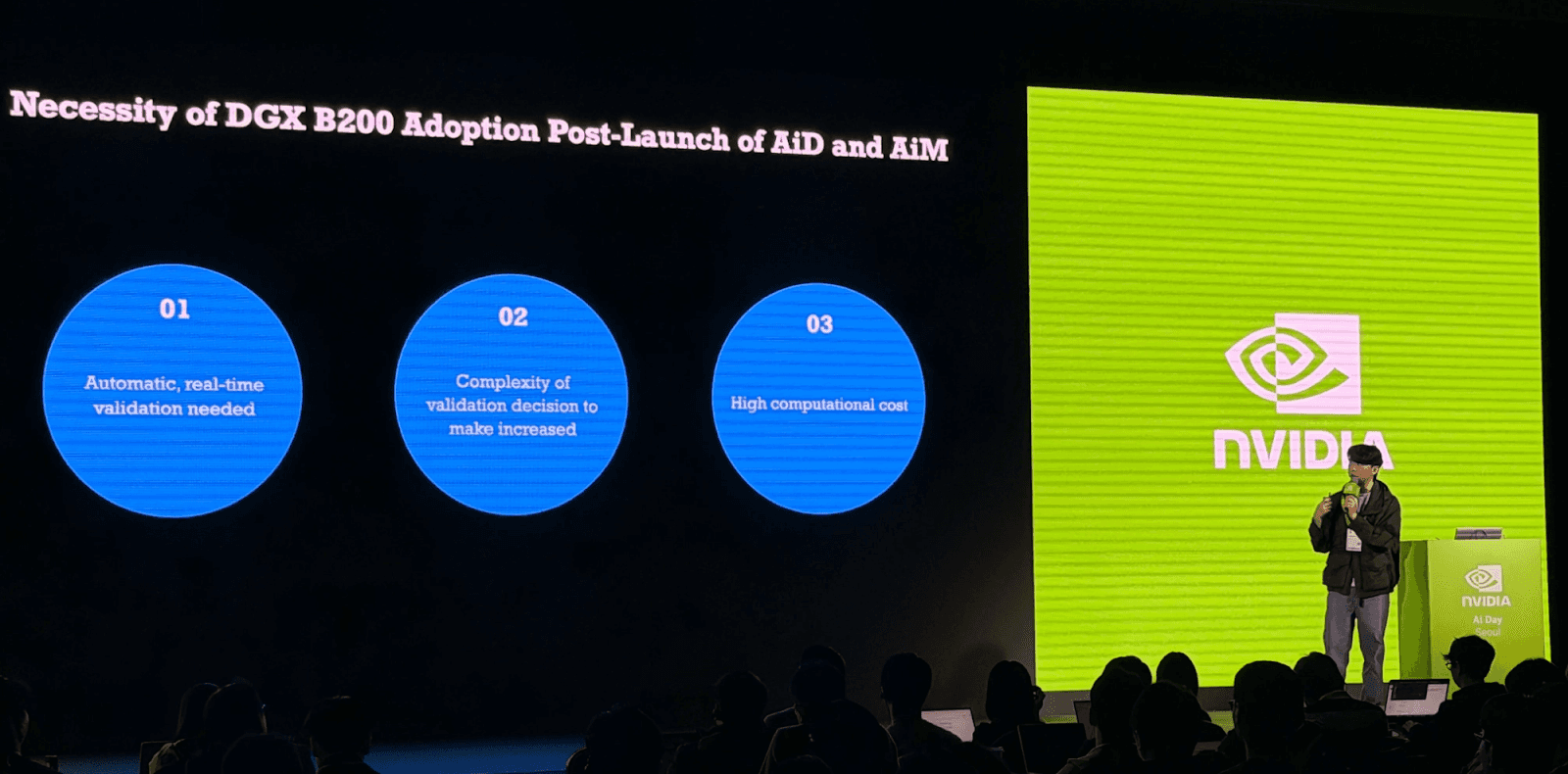

Pyler CEO Jaeho Oh presents on scalable infrastructure for AI validation and brand trust at NVIDIA AI Day Seoul 2025